Autonomous Robot Manipulator

CSCI3302 Final Project

Project Overview

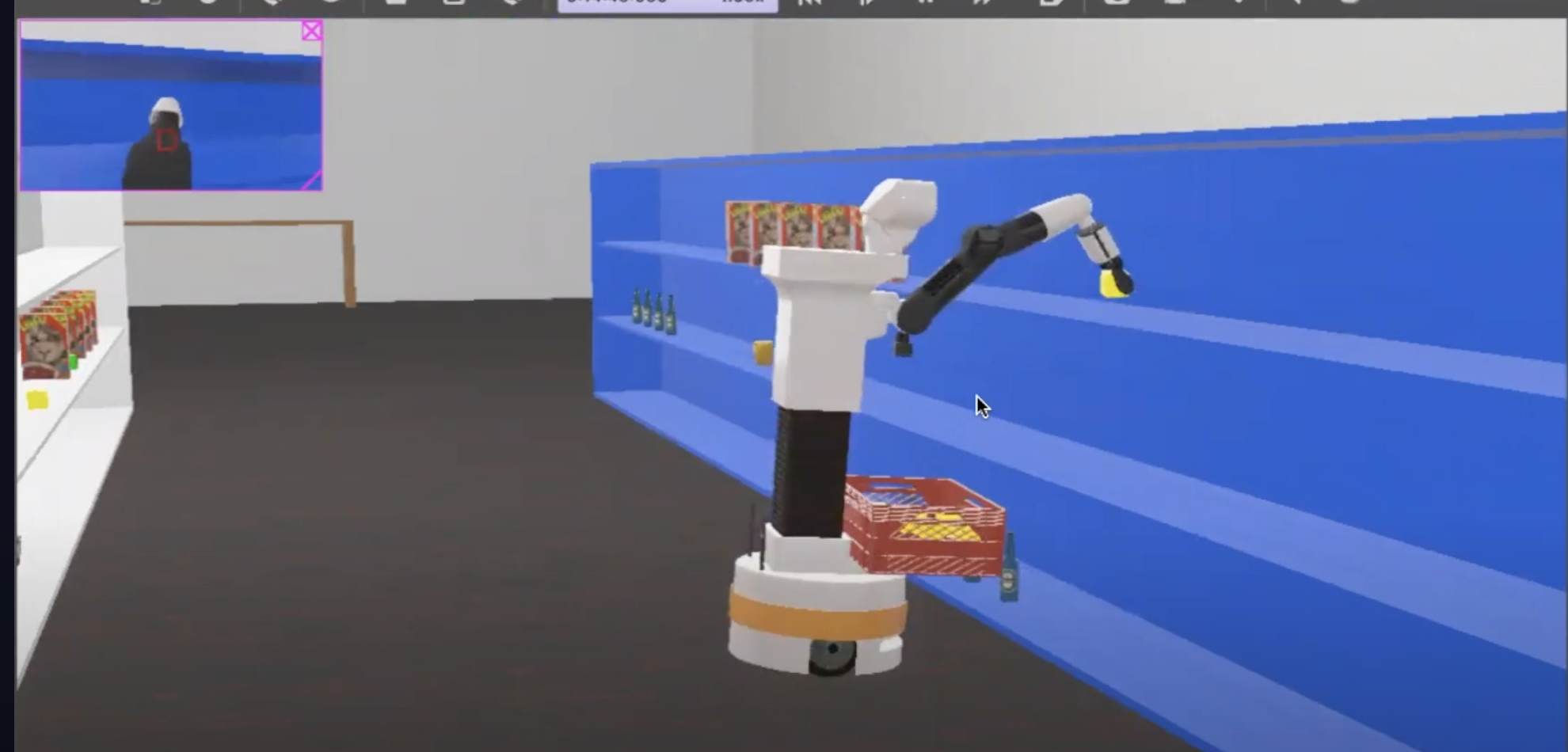

This project showcases the design and implementation of a fully autonomous mobile manipulator using the TIAGo robot platform.

The robot is capable of scanning, mapping, navigating, and retrieving objects in a simulated 3D supermarket environment, combining perception, path planning, and manipulation.

The core focus was on developing robust autonomy using LiDAR-based SLAM, RRT path planning, and inverse kinematics, reaching 90% object retrieval accuracy.

Programmed a TIAGo robot to autonomously scan, map, and retrieve objects from a 3D supermarket environment with 90% accuracy.

Key Features

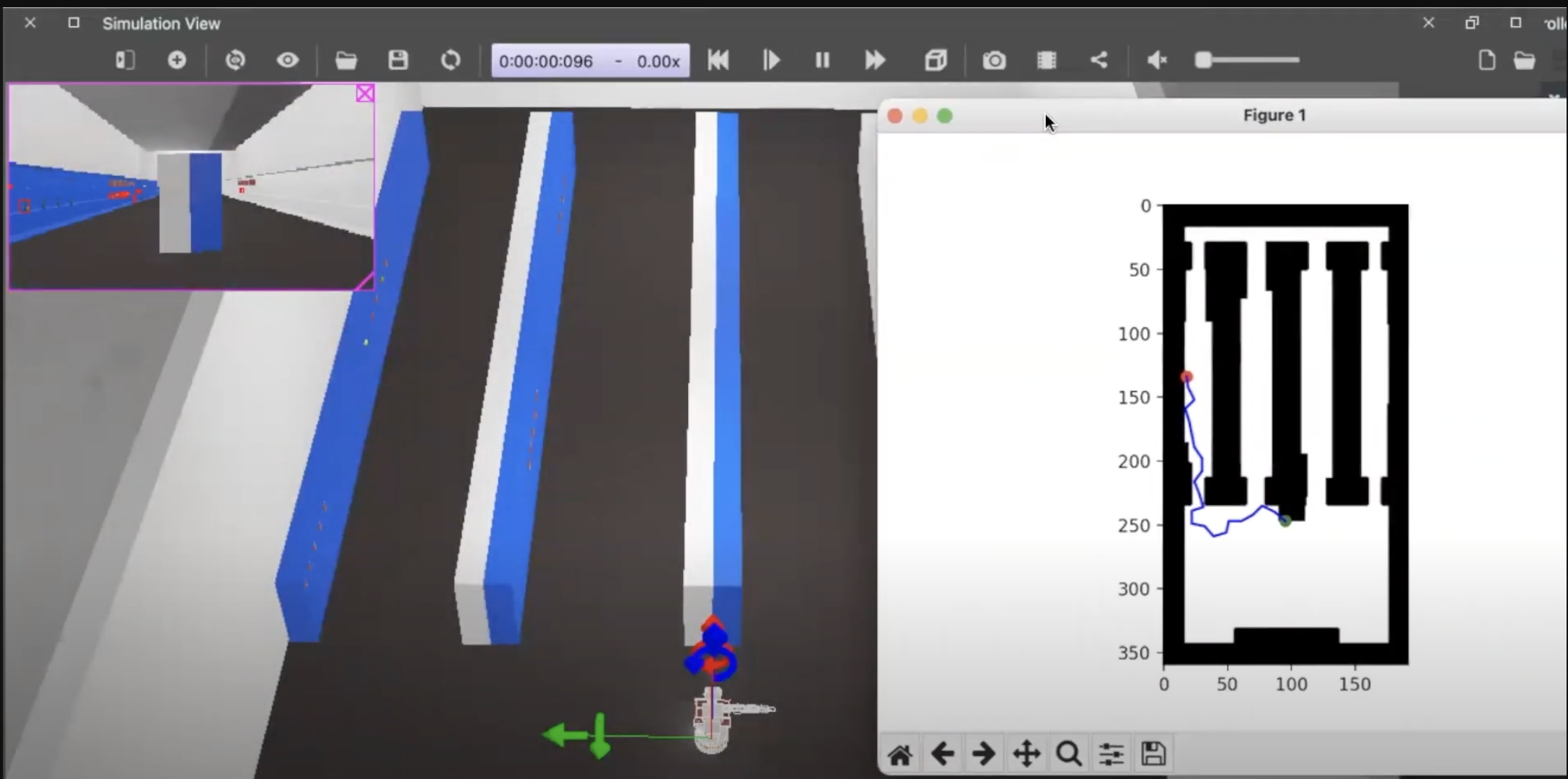

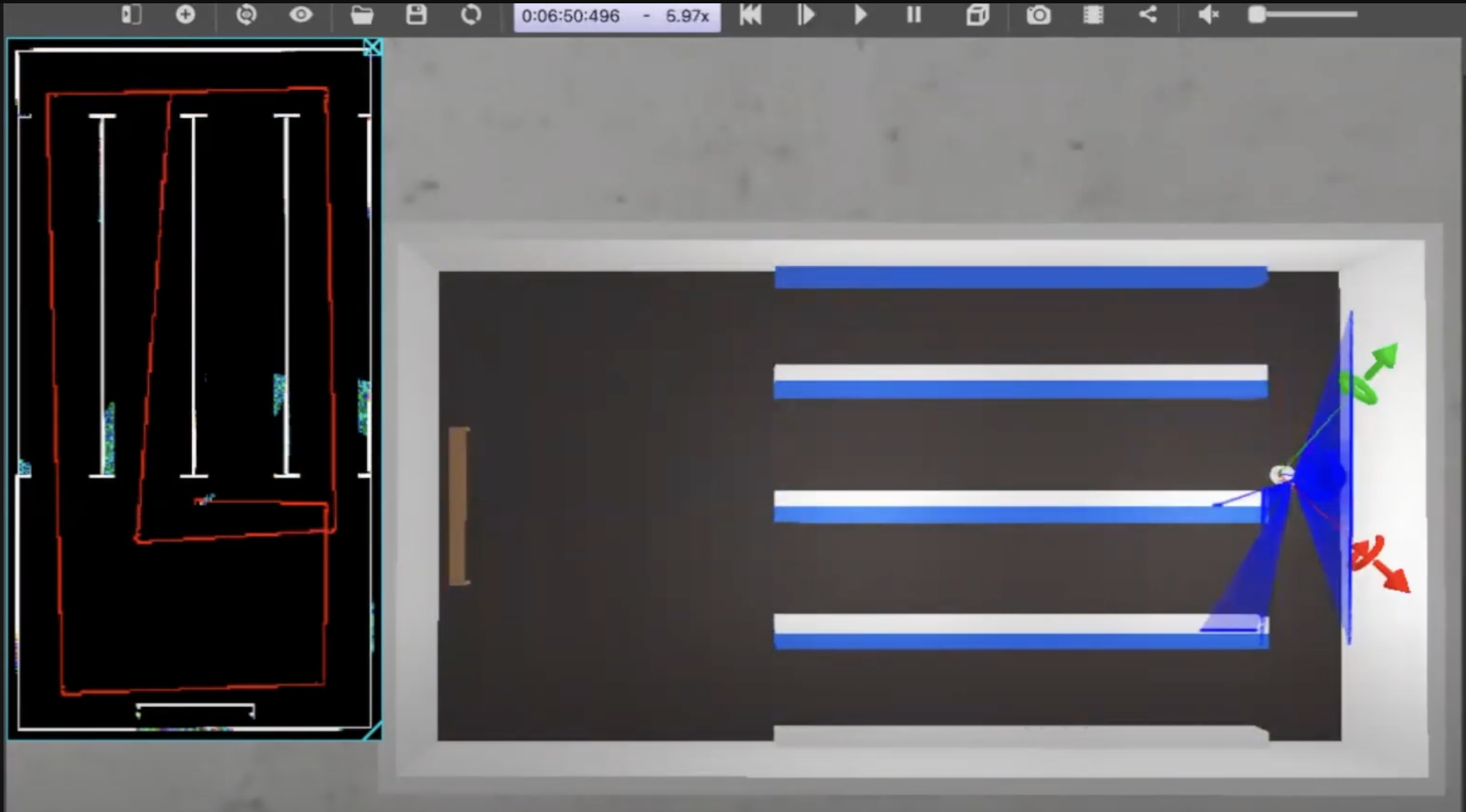

- Developed a 2D occupancy map of the supermarket using LiDAR for precise localization and navigation.

- Implemented color blob detection via OpenCV to identify and localize shelf items visually.

- Used the RRT (Rapidly-exploring Random Tree) algorithm to compute efficient, collision-free paths in dynamic environments.

- Applied inverse kinematics to control the robot’s 6-DOF arm for object manipulation in Cartesian space.

- Integrated perception, planning, and control into a cohesive behavior tree for full autonomy.
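The behavior-tree bullet above can be illustrated with a minimal sketch. It is not the project's actual tree: the node classes, the leaf functions (`map_exists`, `build_map`, `plan_path`, `grasp_item`), and the dictionary used as a blackboard are all hypothetical names chosen for illustration. The sketch assumes a memoryless tree that is ticked from the root each time.

```python
from enum import Enum


class Status(Enum):
    SUCCESS = 1
    FAILURE = 2
    RUNNING = 3


class Action:
    """Leaf node wrapping a callable that takes the blackboard and returns a Status."""

    def __init__(self, name, fn):
        self.name, self.fn = name, fn

    def tick(self, blackboard):
        return self.fn(blackboard)


class Sequence:
    """Ticks children in order; stops at the first child that is not SUCCESS."""

    def __init__(self, children):
        self.children = children

    def tick(self, blackboard):
        for child in self.children:
            status = child.tick(blackboard)
            if status is not Status.SUCCESS:
                return status
        return Status.SUCCESS


class Fallback:
    """Ticks children in order; stops at the first child that is not FAILURE."""

    def __init__(self, children):
        self.children = children

    def tick(self, blackboard):
        for child in self.children:
            status = child.tick(blackboard)
            if status is not Status.FAILURE:
                return status
        return Status.FAILURE


# Hypothetical leaf behaviors; a real robot would call its mapping,
# planning, and arm-control code here.
def map_exists(bb):
    return Status.SUCCESS if bb.get("map") else Status.FAILURE


def build_map(bb):
    bb["map"] = "occupancy grid"
    return Status.SUCCESS


def plan_path(bb):
    bb["path"] = ["start", "shelf"]
    return Status.SUCCESS


def grasp_item(bb):
    bb["holding"] = True
    return Status.SUCCESS


tree = Sequence([
    # Build the map only if one is not already available.
    Fallback([Action("map_exists", map_exists), Action("build_map", build_map)]),
    Action("plan_path", plan_path),
    Action("grasp_item", grasp_item),
])
blackboard = {}
result = tree.tick(blackboard)
```

The `Fallback` node is what lets the mapping step be skipped on later ticks once a map exists, while the enclosing `Sequence` enforces the map, plan, grasp ordering.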

Combining path planning, vision, and manipulation, the TIAGo robot autonomously retrieves items from the shelf in simulation.
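As a rough illustration of the path-planning step, here is a minimal 2D RRT sketch in pure Python. It is written under several assumptions that do not come from the project: obstacles are circles given as `(cx, cy, radius)`, the workspace is a rectangle `(xmin, xmax, ymin, ymax)`, and the parameters `step_size`, `goal_bias`, and `max_iters` are illustrative values. A planner for the real map would check collisions against the occupancy grid instead.

```python
import math
import random


def collides(p, obstacles):
    """True if point p lies inside any circular obstacle (cx, cy, radius)."""
    return any(math.hypot(p[0] - cx, p[1] - cy) <= r for cx, cy, r in obstacles)


def segment_free(a, b, obstacles, step=0.05):
    """Check a straight segment by sampling points along it."""
    n = max(1, int(math.dist(a, b) / step))
    return not any(
        collides((a[0] + (b[0] - a[0]) * i / n, a[1] + (b[1] - a[1]) * i / n), obstacles)
        for i in range(n + 1)
    )


def rrt(start, goal, obstacles, bounds, step_size=0.5, goal_bias=0.1,
        max_iters=5000, seed=0):
    """Grow a tree from start; return a list of waypoints to goal, or None."""
    if start == goal:
        return [start]
    rng = random.Random(seed)
    parent = {start: None}  # maps each tree node to its parent
    for _ in range(max_iters):
        # Occasionally sample the goal itself to pull the tree toward it.
        if rng.random() < goal_bias:
            sample = goal
        else:
            sample = (rng.uniform(bounds[0], bounds[1]),
                      rng.uniform(bounds[2], bounds[3]))
        nearest = min(parent, key=lambda node: math.dist(node, sample))
        d = math.dist(nearest, sample)
        if d == 0:
            continue
        # Steer: move at most step_size from the nearest node toward the sample.
        t = min(1.0, step_size / d)
        new = (nearest[0] + t * (sample[0] - nearest[0]),
               nearest[1] + t * (sample[1] - nearest[1]))
        if new in parent or not segment_free(nearest, new, obstacles):
            continue
        parent[new] = nearest
        # Connect to the goal once it is within one collision-free step.
        if math.dist(new, goal) <= step_size and segment_free(new, goal, obstacles):
            if new != goal:
                parent[goal] = new
            path, node = [], goal
            while node is not None:  # walk parent links back to the start
                path.append(node)
                node = parent[node]
            return path[::-1]
    return None


obstacles = [(5.0, 5.0, 1.5)]  # one circular obstacle in the middle
path = rrt((1.0, 1.0), (9.0, 9.0), obstacles, bounds=(0.0, 10.0, 0.0, 10.0))
```

Plain RRT returns the first feasible path it finds, not a shortest one, so the waypoints are usually smoothed or shortcut before being sent to a controller.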

Project Demo

Watch the full demonstration below:

Technical Stack

- Languages: Python, Bash

- Libraries & Tools: ROS 2, Webots, OpenCV, NumPy

- Concepts: SLAM, RRT, Inverse Kinematics, Sensor Fusion, Behavior Trees
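Of the concepts listed above, the mapping half of SLAM is the easiest to show in a few lines. The sketch below is an assumption-laden illustration, not the project's code: it assumes the robot pose is already known, uses a hypothetical 10 m by 10 m grid with 0.1 m cells, and simply raises the occupancy value of the cell where each LiDAR beam ends. The names `CELL_SIZE`, `world_to_cell`, and `update_grid` are made up for this example.

```python
import math

CELL_SIZE = 0.1            # metres per grid cell (assumed value)
GRID_W, GRID_H = 100, 100  # grid size in cells (assumed value)


def world_to_cell(x, y):
    """Convert world coordinates in metres to integer grid indices."""
    return math.floor(x / CELL_SIZE), math.floor(y / CELL_SIZE)


def update_grid(grid, pose, ranges, angle_min, angle_step, max_range, hit_gain=0.2):
    """Raise the occupancy value of every cell where a LiDAR beam ends.

    pose   -- (x, y, theta) of the robot in the world frame
    ranges -- beam distances in metres, ordered from angle_min upward
    """
    x, y, theta = pose
    for i, r in enumerate(ranges):
        # Skip beams that returned no usable reading.
        if math.isinf(r) or math.isnan(r) or r >= max_range:
            continue
        beam_angle = theta + angle_min + i * angle_step
        hit_x = x + r * math.cos(beam_angle)
        hit_y = y + r * math.sin(beam_angle)
        cx, cy = world_to_cell(hit_x, hit_y)
        if 0 <= cx < GRID_W and 0 <= cy < GRID_H:
            # Clamp at 1.0 so repeated hits saturate instead of growing forever.
            grid[cy][cx] = min(1.0, grid[cy][cx] + hit_gain)
    return grid


grid = [[0.0] * GRID_W for _ in range(GRID_H)]
# Robot at (5.05 m, 5.05 m) facing +x; three beams at -90, 0, and +90 degrees.
scan = [1.0, 2.0, float("inf")]
update_grid(grid, (5.05, 5.05, 0.0), scan, -math.pi / 2, math.pi / 2, max_range=5.0)
```

Thresholding the accumulated values (for example, treating cells above some cutoff as obstacles) then gives a binary map that a planner can query.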

Project Repository

View the full code and documentation for this project on GitHub.